High visitor numbers are essential for almost any way of making money on the internet. But how do you get more visitors to your website? This article gives 23 detailed SEO tips that help you reach better positions in the search engines and thus attract new visitors.

Content

Avoid Duplicate Content

Duplicate content very often leads to worse rankings in the search engines. The most common cause is that a site can be reached both with and without www. and delivers the same content under both addresses (e.g. www.yoursite.com and yoursite.com), without one of the two versions being redirected to the other via a 301 redirect.

Duplicate content often leads to lower positions in the SERPs for two reasons: the two versions of a page must share the backlinks that would normally go to a single page, and Google rates unique content higher than duplicated content.

WordPress users: Luckily for WordPress users, and users of other content management systems (CMS), the choice of www vs. non-www is configured automatically upon installation. However, HTTP is not automatically redirected to HTTPS, which means you may have duplicate http:// and https:// versions of your website. An easy fix is the Really Simple SSL plugin.

Manual way: If your website has duplicate www and non-www versions, you can place the following code in your website’s configuration file named .htaccess (a hidden file). It ensures that people and machines use only one version of your website instead of several.

Manually force the “naked URL” http://yoursite.com to the www version, e.g. http://www.yoursite.com, or vice versa. To do this, create a 301 redirect that forces all HTTP requests to use only one of the two versions.

Note: the code snippets in this article assume that Apache is the web server and mod_rewrite is enabled. If you’re on shared or commercial hosting bought for WordPress, this is highly likely; if you are uncertain, contact your hosting provider.

- Example 1: Redirect yoursite.com to www.yoursite.com:

    RewriteEngine On
    RewriteCond %{HTTP_HOST} !^www\.yoursite\.com$ [NC]
    RewriteRule ^(.*)$ http://www.yoursite.com/$1 [L,R=301]

- Example 2: Redirect www.yoursite.com to yoursite.com:

    RewriteEngine On
    RewriteCond %{HTTP_HOST} ^www\.yoursite\.com$ [NC]
    RewriteRule ^(.*)$ http://yoursite.com/$1 [L,R=301]

Canonical tag: Another way to avoid duplicate content is the canonical tag, which is supported by Google and all other major search engines. It tells search engines which URL is the preferred version when several pages of your website show the same or very similar content, for example on websites that list variations of the same product on separate URLs.

The canonical tag is a <link> element with rel="canonical" that goes in the <head> section of the HTML code. Select the page you consider to be the “base” of the variations and place a tag pointing to it in the <head> of each variation. For example, if the base page is http://yoursite.com/example/, the tag looks like this:

<link rel="canonical" href="http://yoursite.com/example/" />

HTTP vs. HTTPS: According to Google, HTTPS is the future of the web, and the search engine gives websites that use this secure connection a ranking benefit. You can get a free SSL certificate for your server from Let’s Encrypt. Once it is installed, however, redirect the HTTP version of your website to HTTPS; otherwise both versions remain accessible and count as duplicate content in the eyes of search engines.

Force SSL manually in your .htaccess file:

Note: If your .htaccess already contains rules, add this code above them.

    RewriteEngine On
    RewriteCond %{HTTPS} off
    RewriteRule ^(.*)$ https://%{HTTP_HOST}%{REQUEST_URI} [L,R=301]
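To see what these redirects do to a given address, the combined logic (force HTTPS and force one host name) can be modeled in a few lines of Python. This is only a sketch for checking your expectations, not a replacement for the .htaccess rules; `PREFERRED_HOST` and `redirect_target` are hypothetical names, and www.yoursite.com stands in for your own domain.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Placeholder settings (assumption): use your own preferred scheme and host.
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "www.yoursite.com"


def redirect_target(url: str) -> Optional[str]:
    """Return the URL a 301 redirect should send `url` to, or None if the
    URL already uses the preferred scheme and host."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme == PREFERRED_SCHEME and host == PREFERRED_HOST:
        return None  # already canonical, no redirect needed
    # Keep the path and query string, like $1 and %{REQUEST_URI} in the rules.
    path = parts.path or "/"
    return urlunsplit((PREFERRED_SCHEME, PREFERRED_HOST, path, parts.query, ""))


print(redirect_target("http://yoursite.com/blog/?p=1"))    # → https://www.yoursite.com/blog/?p=1
print(redirect_target("https://www.yoursite.com/about/"))  # → None
```

Every non-canonical variant maps to exactly one address, which is what keeps search engines from seeing several copies of the same page.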

Create Opportunities for User-Generated Content

“Content is king.” Quality content is what real SEO strives for. In essence, organic traffic is simply your website’s answer to a searcher’s query. Even if you’re not an expert in the subject you’re writing about, various platforms let your visitors create content on your pages themselves (user-generated content). The following site areas are particularly suitable for user-generated content:

- Forums

- Question / answer areas

- User Reviews

- Profile Pages

- Bug reports

- Comment sections (e.g. on a blog)

Remember, however, to check third-party content editorially so that you don’t publish spam submissions. Anti-spam tools such as your CMS’s native anti-spam plugins help with this.

Structure Your Site Hierarchy with Headings

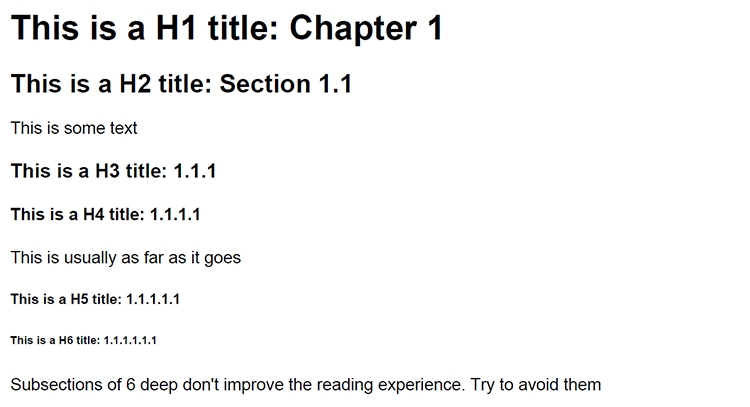

Structuring web texts with headings is important not only for good readability; it also has a positive impact on search engine optimization, because search engines can classify the individual text sections semantically and pick up keywords from the headings. Another benefit is that a clear heading arrangement automatically improves the logical structure of a text.

The HTML <h1> to <h6> elements represent six levels of section headings. <h1> is the highest section level and <h6> is the lowest. Usually the <h1> heading is reserved for article titles, whereas <h2>, <h3> and subsequent headings are used to break up the structure of the article body.
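Because the heading levels form an outline, a page's hierarchy can be checked automatically. The Python sketch below uses only the standard library's html.parser and flags two common problems: not exactly one <h1>, and skipped levels such as an <h4> directly after an <h2>. Both rules are widespread conventions assumed here, not official search engine requirements; `heading_problems` is a hypothetical helper name.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingOutline(HTMLParser):
    """Collects (level, text) pairs for every h1 to h6 element, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: list[tuple[int, str]] = []
        self._level = 0  # level of the heading we are currently inside, 0 = none
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS and self._level:
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = 0


def heading_problems(html: str) -> list[str]:
    """Warn if there is not exactly one <h1> or if a heading level is skipped."""
    parser = HeadingOutline()
    parser.feed(html)
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected 1 <h1>, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        if previous and level > previous + 1:
            problems.append(f"skipped level before <h{level}> '{text}'")
        previous = level
    return problems


print(heading_problems("<h1>Grow Guide</h1><h2>Lighting</h2><h4>LED</h4>"))
# → ["skipped level before <h4> 'LED'"]
```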

Update Content on Regular Basis

Google indexes frequently updated pages more often, and with sufficient trust these pages are often rewarded with higher rankings in the SERPs than similar pages that rarely change.

Integrating a news section, a blog, an article directory or a forum into your website therefore helps provide regular fresh content. Old content can also always be updated with new information.

Keywords

Use Longtail Keywords on Competitive Search Terms

Longtail keywords are search queries that contain several words. Targeting longtail search terms increases the chance for small websites to appear in search results, because there is less competition for these very specific queries.

For example the longtail keyword “How to grow cannabis indoors” is pretty competitive. Searching this results in a number of high authority websites with thousand-plus word articles and good backlinks. The chance that a new website is going to outrank these initially is slim-to-nil.

However, by narrowing the query down even further, e.g. to “How to grow cannabis indoors for under $500”, you have a higher chance to appear in search results, because that phrase has been covered less often. An effective strategy for new websites to start ranking organically is therefore to target niche-related longtail keywords.

Optimize your keyword density

Keyword density is the number of times your target keyword appears on a web page, expressed as a percentage of the page’s total word count. A keyword density of 1%, for example, means that on average every 100th word is the keyword.

When planning keyword density, it is important that it is neither too high nor too low. A very high keyword density (more than 10%) can easily be seen as keyword stuffing and penalized by the search engines with poor SERP positions. A very low keyword density (less than 0.7%), on the other hand, does not get the most out of a text and may prevent a good ranking, even if all other factors are optimal.

When choosing a keyword density, it is also important to keep the text readable for humans, so that they stay on the page and keep reading! All too often writers target search engines only, which leads to spammy, unreadable text that helps nobody.

The optimal keyword density, balancing readability and optimization, is still heavily debated in the SEO community, but it’s generally recommended to be between 2% and 5%. Various free keyword density checkers are available online to assess your keyword density and go from there.
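The percentage itself is simple arithmetic: occurrences of the keyword divided by the total number of words, times 100. A minimal Python sketch, assuming a multi-word keyword counts once per occurrence (some checker tools instead multiply by the number of words in the phrase, so results can differ between tools):

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Keyword occurrences as a percentage of all words in `text`.

    Assumption: a multi-word keyword counts once per occurrence.
    """
    words = re.findall(r"[\w']+", text.lower())
    kw = re.findall(r"[\w']+", keyword.lower())
    if not words or not kw:
        return 0.0
    n = len(kw)
    # Slide a window of len(keyword) words over the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw)
    return 100.0 * hits / len(words)


print(keyword_density("Our grow tent is a great grow tent for beginners", "grow tent"))  # → 20.0
```

The sample sentence is deliberately short; 20% would be far above the ranges discussed above, while the same two mentions in a 200-word text would give 1%.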

Track your Keyword Positions in the SERPs

Even the best optimization measures are useless if you cannot monitor their success or failure, because otherwise you never really know what an action achieved, or whether you could have achieved more by other means.

Monitoring your keyword positions in the SERPs (Search Engine Result Pages) helps you keep track of your SEO measures and identify the strategies that bring you the most benefit. To monitor keyword positions, use Google’s free Search Console (formerly Google Webmaster Tools).

Find the Best Keywords Through Keyword Research

Keywords come and go with the times. What’s popular today in search engines may not be popular tomorrow. Make sure to take news and trends into account when planning the best keyword strategies for your website.

Synonyms of a word can also sometimes achieve higher search volumes or face less competition. Various free keyword research tools let you look up search volumes and help you find the best keywords. Keep in mind, however, that even the best keyword research tools only provide estimates of search volume.

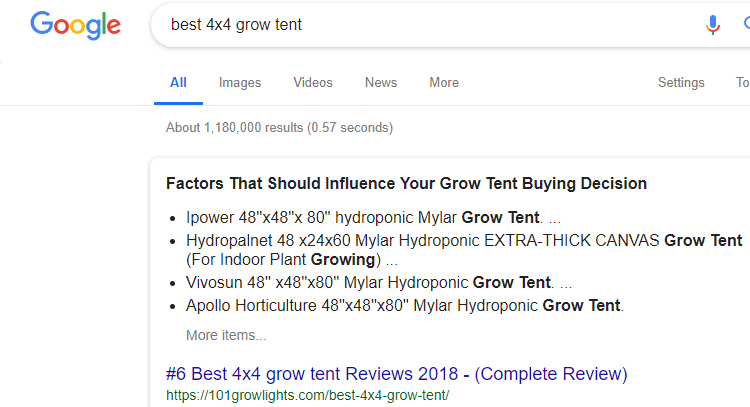

Use keywords in URL, Title and Meta Description

Keywords that appear in the URL, the title or the meta description are often highlighted in bold in Google’s search results. This draws more attention to your listing, raises the probability of a click and thus increases the click-through rate (CTR).

Take a look at the URL, title and keywords in the first result (featured snippet) on Google for “Best 4×4 Grow Tent” and learn from this example.
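To check your own pages for this, a short Python sketch can report whether a keyword appears in the URL path, the <title> and the meta description. It uses only the standard library and assumes that URL slugs separate words with hyphens; `keyword_placement` is a hypothetical helper, and a plain substring match only approximates how Google highlights terms.

```python
from html.parser import HTMLParser
from urllib.parse import unquote, urlsplit


class _TitleMetaParser(HTMLParser):
    """Extracts the <title> text and the meta description of a page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def keyword_placement(url: str, html: str, keyword: str) -> dict[str, bool]:
    """Report whether `keyword` appears in the URL path, title and meta description."""
    parser = _TitleMetaParser()
    parser.feed(html)
    kw = keyword.lower()
    path = unquote(urlsplit(url).path).lower()
    return {
        "url": kw.replace(" ", "-") in path,  # assumes hyphenated slugs
        "title": kw in parser.title.lower(),
        "meta_description": kw in parser.description.lower(),
    }


page = ('<html><head><title>Best Grow Tent Reviews</title>'
        '<meta name="description" content="We compare grow tents for every budget.">'
        '</head></html>')
print(keyword_placement("https://yoursite.com/best-grow-tent/", page, "best grow tent"))
# → {'url': True, 'title': True, 'meta_description': False}
```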

Backlinks, Links, Links

Operate a Steady, Organic Link Building Campaign

The number and quality of your backlinks are very important for your position in the search engines. It is therefore important to run a continuous link building campaign that steadily builds up your backlinks.

Organic backlink building is a process in which backlinks grow naturally, as opposed to being blasted out in bulk with spam software. For example, aim to build backlinks every month on webpages with varying trust and authority metrics, instead of a once-a-year blast of 1,200 backlinks.

Building backlinks is not as difficult as it looks at first. We provide many backlink building services at CoxSEO.com for cannabis niche and related websites.

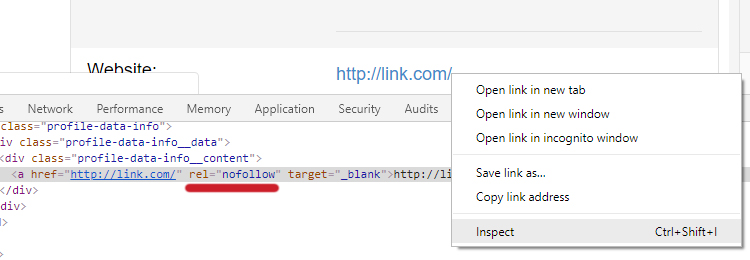

Nofollow vs. Dofollow

The HTML attribute rel="nofollow" tells search engines that a link is not an endorsement. As a result, backlinks with this attribute are less powerful for ranking purposes than links without it. The SEO community simply calls such links nofollow links.

Many social media and other websites, including Facebook, add the nofollow attribute to links automatically. You can check whether your backlink has the nofollow attribute by right-clicking the link in your browser and selecting Inspect to open the developer tools.

Links without the nofollow attribute are called dofollow links. Dofollow links are much more effective at improving your SEO ranking, especially when they come from niche authority websites such as Leafly.

Nofollow links are not useless, however, especially if people actually click on them (real traffic). They also seem to serve a ranking purpose in large numbers, for example when a link gets many retweets; this is what we call social signals. Remember that a natural backlink profile has a mix of both high-authority dofollow links and social-signal nofollow links.
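Instead of inspecting links one by one in the browser, you can also sort all links of a page by their rel attribute. This Python sketch treats a link as nofollow only if its rel attribute contains the value nofollow; it ignores Google's newer rel values sponsored and ugc, and `LinkRelParser` is a hypothetical name.

```python
from html.parser import HTMLParser


class LinkRelParser(HTMLParser):
    """Sorts every <a href> on a page into dofollow and nofollow lists."""

    def __init__(self) -> None:
        super().__init__()
        self.dofollow: list[str] = []
        self.nofollow: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return  # anchors without a target are not links
        # rel may hold several space-separated values, e.g. "nofollow noopener".
        rel_values = (attrs.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.nofollow.append(href)
        else:
            self.dofollow.append(href)


parser = LinkRelParser()
parser.feed('<a href="https://www.leafly.com/">Leafly</a> '
            '<a href="https://twitter.com/" rel="nofollow noopener">Tweet</a>')
print(parser.dofollow)  # → ['https://www.leafly.com/']
print(parser.nofollow)  # → ['https://twitter.com/']
```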

Redirect Links That Point to Non-Existent Pages

Over the lifetime of a site, content or pages are removed from time to time, the page structure is reworked or URL paths are changed. As a result, existing backlinks often point to pages that no longer exist (status code 404, not found), and these backlinks are effectively lost.

So when you change URL paths, make sure the old paths are redirected to the new ones with a 301 (permanent) redirect, e.g. via .htaccess or a CMS plugin. If pages have been removed completely, it is recommended to redirect them to similar pages or, if necessary, to the home page.

However, you can only detect some of these broken URLs once a search engine bot has crawled them. The reports are available in Google Search Console: check the list of not-found (404) errors regularly to find pages whose backlinks you should redirect from old to new URLs. For example, add the following line to your .htaccess file:

    Redirect 301 /old-page.html /new-page.html
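When many pages move at once, writing these lines by hand becomes error-prone. A small Python sketch can generate them from a mapping of old to new paths; `redirect_lines` and the example paths are hypothetical, and paths containing spaces would need quoting, which this sketch does not handle.

```python
def redirect_lines(moves: dict[str, str]) -> str:
    """Build mod_alias 'Redirect 301' lines from a mapping of old -> new URL paths."""
    lines = []
    for old, new in moves.items():
        if not old.startswith("/"):
            # The old location must be a URL path starting with a slash.
            raise ValueError(f"old path must start with '/': {old!r}")
        lines.append(f"Redirect 301 {old} {new}")
    return "\n".join(lines)


print(redirect_lines({
    "/old-page.html": "/new-page.html",
    "/2019/seo-tips/": "/blog/seo-tips/",
}))
```

The printed lines can be pasted into .htaccess in the same form as the single example above.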

Link Out to Authority Sites

Linking out to authority websites has been shown to be beneficial for ranking purposes, and it also demonstrates your knowledge and recognition of others in your niche. Don’t be afraid to link out to informative, relevant articles or URLs on authority sites. You can recognize authorities on the internet by one or more of the following signs:

- they have a large number of backlinks

- there are a large number of blog articles about them

- they have a high Moz DA/PA Score

- they have a high Majestic TF/CF score

- they have been online for 5 years or longer

- they have high activity in social media portals

- they achieve very good positions for highly competitive keywords

- their new content ranks well in no time

Authorities enjoy greater credibility than other pages. By linking to authority sites, you signal to search engines that your article deserves to be classified as high quality, and part of the authority website’s credibility rubs off on your page.

Optimize the Distribution of your Link Juice

A page’s link juice is distributed in equal shares across all of its links: internal and external, dofollow and nofollow. Make sure you control your link juice deliberately and steer it toward the pages that should rank highly. For example, if most of your internal links point to an insignificant page, they bring you relatively little from an SEO point of view.
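The equal-share idea comes from the original PageRank formula, in which each page passes a damped share of its score to every page it links to. The Python sketch below implements that classic formula over a hypothetical internal link graph; Google's current ranking uses many more signals and this model does not treat nofollow links specially, so read the numbers as an illustration of how link structure shifts weight, not as real rankings.

```python
def link_juice(links: dict[str, list[str]], damping: float = 0.85,
               iterations: int = 50) -> dict[str, float]:
    """Classic PageRank by power iteration over an internal link graph.

    `links` maps each page to the pages it links to. Every link gets an equal
    share of the page's score; pages without outgoing links spread their score
    evenly across all pages. Scores always sum to 1.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    score = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                share = damping * score[page] / len(targets)
                for target in targets:
                    new[target] += share
            else:
                for target in pages:
                    new[target] += damping * score[page] / n
        score = new
    return score


# Hypothetical site structure: most internal links point back to "home".
site = {
    "home": ["products", "about", "imprint"],
    "products": ["home", "grow-tents"],
    "grow-tents": ["home", "products"],
    "about": ["home"],
    "imprint": ["home"],
}
for page, value in sorted(link_juice(site).items(), key=lambda kv: -kv[1]):
    print(f"{page:12} {value:.3f}")
```

Adding internal links to a page (for example from "about" to "grow-tents") raises that page's score in this model, which is the steering effect described above.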

Security

Pay Attention to the Security Aspects of your Website

Every day, hundreds of websites are hacked. From an SEO point of view, what matters is not only that visitors leave a hacked site immediately and criminals get hold of stored data, but above all that Google often flags hacked sites in its search results with a security warning such as “This site may harm your computer”.

Such a warning means that hardly anyone visits the site anymore. The following basic security practices are therefore practically indispensable for running a website, from an SEO point of view as well:

- Regular CMS Core updates (WordPress, Drupal, Magento, Prestashop, TYPO3, Joomla etc.)

- Security features/plugins that limit login attempts

- Regular extension updates

- Regular inspection of the PHP Error Logs

- Only put your own PHP code online if you know how to write secure PHP code (forms, etc.)

- Check PHP code for errors

- Penetration-test the code you use

- Create an emergency plan and keep it accessible (who must be notified, what happens to customer data, what to do, how to get the site back online as quickly as possible, contingency plans, etc.)

Server administrators naturally have to deal with the matter in more depth and should consider at least the following points:

- Regular updates of important components (Apache, PHP, MySQL, etc.)

- Subscribe to important security bulletins

- Regular patch deployment

- Securing the server (mod_security, mod_evasive, etc.)

- Minimize information disclosure (e.g. turn the server signature off)

- Regular review of important log files (access.log, error.log, user logins, system messages, etc.)

- Regular penetration testing (check the e-mail server, check the Apache server, play through common attack scenarios, etc.)

- Automatically copy log files to external storage (e.g. via online transfer) to prevent subsequent manipulation

- Possibly live monitoring of requests, e.g. with apachetop, to detect DDoS attacks

- Uptime monitoring and monitoring of all important services, so that you are informed immediately when something fails

- Prepare emergency plans and keep them accessible
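The log review and login-limit points above can be partly automated. The Python sketch below counts POST requests to the WordPress login page per IP address in an Apache access log and reports IPs above a threshold. The regular expression assumes Apache's common or combined log format, the default path and threshold are assumptions to adjust, and `suspicious_login_ips` is a hypothetical name. It only reports and blocks nothing, so it complements rather than replaces tools like mod_evasive or a login-limiting plugin.

```python
import re
from collections import Counter

# Client IP, method and path from Apache's common/combined log format, e.g.
# 203.0.113.5 - - [10/Oct/2023:13:55:36 +0000] "POST /wp-login.php HTTP/1.1" 200 512
LOG_LINE = re.compile(r'^(\S+) \S+ \S+ \[[^\]]*\] "(\S+) (\S+)[^"]*"')


def suspicious_login_ips(log_lines, login_path="/wp-login.php", threshold=20):
    """Return {ip: count} for IPs that sent more than `threshold` POST requests
    to `login_path`. Default path and threshold are assumptions; tune them."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in other formats
        ip, method, path = match.groups()
        if method == "POST" and path.split("?", 1)[0] == login_path:
            counts[ip] += 1
    return {ip: n for ip, n in counts.items() if n > threshold}
```

Because iterating over an open file yields its lines, you can pass the opened access.log directly; its location depends on your server configuration.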

If you use WordPress, I recommend the Loginizer plugin, which limits the number of brute-force login attempts before blocking the IP address.

Also consider local security: an attack on your website can also come by way of your own PC (spied-out FTP access, stolen roadmaps and prototype code, etc.). Keeping your PC secure is a must for any webmaster.

Performance

Optimize the performance of your pages

The loading time of a site is an important ranking factor that can decide between top and bottom positions in Google’s search results. In its Web Performance Best Practices, Google recommends optimizing areas such as on-page loading speed and mobile optimization, including:

- Optimize caching (keep content available offline)

- Minimize request-response round trips

- Avoid data ballast (overhead), e.g. by using GZIP compression

- Minimize traffic (responses, downloads, etc.)

- Optimize browser rendering

- Optimize sites for mobile devices

For the analysis and implementation of the individual points, you can use the Google PageSpeed Insights browser plugin along with Pingdom and GTmetrix page testing tools.
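To get a feeling for what GZIP compression saves on text responses such as HTML, CSS and JavaScript, you can compress a sample locally with Python's gzip module. This is only a measurement sketch (`gzip_savings` is a hypothetical name); on a live site the compression is done by the web server (on Apache, typically mod_deflate), and real pages compress less than this deliberately repetitive sample.

```python
import gzip


def gzip_savings(body: bytes) -> float:
    """Return how many percent smaller `body` gets with GZIP compression."""
    if not body:
        return 0.0
    compressed = gzip.compress(body)
    return 100.0 * (1 - len(compressed) / len(body))


# A repetitive HTML fragment, similar to a product list on a real page.
page = ("<li class='product'>Grow tent 4x4</li>\n" * 200).encode("utf-8")
print(f"{len(page)} bytes, {gzip_savings(page):.1f}% smaller with GZIP")
```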

In addition, there are a number of server-side optimization options:

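- PHP caching (e.g. via OPcache, PHP’s built-in opcode cache; older extensions such as eAccelerator are no longer maintained)

- MySQL server tuning and caching

- Faster server CPU

- Use the latest PHP and MySQL versions

- Avoid PHP errors

- Use the current version of your CMS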

- Server location close to the target group (see traceroute)

- Optimize connectivity and response time

Marketing and Social Media

Press Releases

Articles placed in Google News can drive very high visitor traffic to your own website. However, the quality guidelines for included sites are quite strict, and a successful application usually takes a long time to process. Anyone who regularly publishes self-written news on their website should submit the site to Google News. The decisive question should always be whether your own content adds value to Google News.

There are also a number of cannabis-niche press release writing and distribution agencies, which may be the better option for many cannabis websites.

Social Media

Social media portals also give you the opportunity to reach a very large audience in a short time with good content and interesting tools. In particular, the easy sharing mechanisms (“Like”, “Retweet”, “+1”, guerrilla marketing, etc.) can make social media a meaningful pillar of your search engine optimization. You should know and regularly use the following social media portals to spread your articles and content:

- Facebook (sometimes bans cannabis pages)

- Google+ (soon to be deprecated)

- Tumblr

- Pinterest (careful, they ban cannabis websites)

- YouTube (often bans cannabis channels)

- Instagram (probably the most cannabis page bans)

- and many others

Other Considerations

Keep an Eye on your Bounce Rate

The bounce rate, as defined in Google Analytics, is the percentage of visitors who leave your website after viewing just one page. Depending on the analytics software, short visits of 5 to 10 seconds may also be counted in the bounce rate.
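A minimal Python sketch of the calculation, assuming you already have per-session page-view counts and durations: a session counts as a bounce if it has only one page view, or optionally if it was shorter than a threshold such as the 5 to 10 seconds mentioned above. Real analytics tools define sessions and engagement in their own ways, so the `Session` class and the `short_visit_seconds` parameter are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Session:
    page_views: int
    duration_seconds: float


def bounce_rate(sessions: list[Session], short_visit_seconds: Optional[float] = None) -> float:
    """Percentage of sessions counted as bounces.

    A session bounces if it has at most one page view. If `short_visit_seconds`
    is given, sessions shorter than that also count as bounces.
    """
    if not sessions:
        return 0.0
    bounces = sum(
        1
        for s in sessions
        if s.page_views <= 1
        or (short_visit_seconds is not None and s.duration_seconds < short_visit_seconds)
    )
    return 100.0 * bounces / len(sessions)


visits = [Session(1, 40), Session(3, 120), Session(2, 4), Session(5, 300)]
print(bounce_rate(visits))                          # → 25.0
print(bounce_rate(visits, short_visit_seconds=10))  # → 50.0
```

The same visits give a different bounce rate depending on the definition, which is one reason numbers from different analytics tools are hard to compare.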

However, a high bounce rate is not necessarily a bad thing. For example, if a visitor views your webpage and gets the answer they were looking for, that is a success. Worse than a simple high bounce rate is what we call “pogo-sticking”: a user quickly clicks the back button and goes to another listing in the search results.

You can reduce pogo-sticking by enticing users to read more on your page: avoid annoying pop-ups and flashy images, improve loading speed, and apply other page optimizations (better web design, improved user friendliness, thematically better terms, correct grammar, interesting content, etc.).

Improve your Availability (Uptime)

The percentage of time that your website is available is referred to as uptime. Conversely, downtime is the time during which the website is unreachable.

When measuring uptime, it is therefore important not just to open the site yourself in the browser, but to use external tools that access the website from different locations. An ideal tool for measuring uptime is Pingdom website monitoring, which is free in the standard version.

Optionally, Pingdom can notify you of any downtime immediately by e-mail, so that you can act quickly. For SEO, pay attention to high uptime: too much downtime may hurt your positions in the search engines (high downtime = lower-quality service). Uptime should be at least 99.5%.
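To make the 99.5% target concrete, the allowed downtime is the period length multiplied by the remaining 0.5%. A short Python sketch of that arithmetic, plus a hypothetical helper that turns a list of monitoring checks into an uptime percentage (assuming equally spaced checks); both function names are illustrative.

```python
HOURS_PER_30_DAY_MONTH = 30 * 24
HOURS_PER_YEAR = 365 * 24


def allowed_downtime_hours(uptime_percent: float, period_hours: float) -> float:
    """Maximum downtime in hours that still meets `uptime_percent` over the period."""
    return period_hours * (100.0 - uptime_percent) / 100.0


def measured_uptime(checks: list[bool]) -> float:
    """Uptime in percent from equally spaced monitoring checks (True = reachable)."""
    return 100.0 * sum(checks) / len(checks) if checks else 0.0


print(allowed_downtime_hours(99.5, HOURS_PER_30_DAY_MONTH))  # → 3.6
print(allowed_downtime_hours(99.5, HOURS_PER_YEAR))          # → 43.8
```

In other words, 99.5% uptime still permits about 3.6 hours of downtime in a 30-day month, or about 43.8 hours per year.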

Local SEO is important for businesses that serve local areas. Local results are displayed in Google search above the regular search results. Using Schema markup and adding geo-coordinates to your website can help you move up quickly in the SERPs for your locality.
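Schema markup for a local business is commonly embedded as JSON-LD, one of the structured data formats Google accepts. The Python sketch below builds a schema.org LocalBusiness object with a PostalAddress and GeoCoordinates and wraps it in a script tag for the page's HTML. The function name and all business details are hypothetical placeholders, and a more specific schema.org type than LocalBusiness may fit your business better.

```python
import json


def local_business_jsonld(*, name: str, url: str, street: str, city: str,
                          postal_code: str, country: str,
                          latitude: float, longitude: float) -> str:
    """Return a <script type="application/ld+json"> tag describing a LocalBusiness."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": latitude,
            "longitude": longitude,
        },
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


print(local_business_jsonld(
    name="Example Grow Shop", url="https://yoursite.com/",
    street="123 Main Street", city="Denver", postal_code="80202",
    country="US", latitude=39.7392, longitude=-104.9903,
))
```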

Rich Snippets to Stand out Better in the SERPs

Microformats and other structured data (displayed as rich snippets) enrich search results with additional information and help search engines classify content semantically. For example, an author photo could be displayed next to an article, or an article’s rating could be shown in the search results. So far, Google supports rich snippets for the following content types:

- User Reviews

- People

- Products

- Companies and organizations

- Recipes

- Events

- Music

Using microformats is quite simple. Complete documentation of Google’s microformats and structured data markup can be found here.

Check your Links Regularly with a Link Checker

Links that lead nowhere not only frustrate your visitors but also let existing link juice vanish into nirvana. Therefore, check your website regularly with a link checker, such as the reports provided in Search Console, or third-party tools like Ahrefs, Moz and SEMrush.
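For a quick check of a single page, a short Python sketch can collect its links and ask each target for its HTTP status. It only sees the outgoing links of that page (finding backlinks that point to you still requires tools like those above), it uses HEAD requests, which a few servers reject, and `broken_links` and `http_status` are hypothetical names. The status lookup is a parameter so it can be swapped out, for example in tests.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen

SKIPPED_PREFIXES = ("#", "mailto:", "tel:", "javascript:")


class _HrefCollector(HTMLParser):
    """Collects absolute URLs of all <a href> links on a page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.urls: list[str] = []

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href")
        if tag == "a" and href and not href.startswith(SKIPPED_PREFIXES):
            # Resolve relative links such as "gone/" against the page URL.
            self.urls.append(urljoin(self.base_url, href))


def http_status(url: str, timeout: float = 10.0) -> int:
    """HTTP status of a HEAD request; 0 if the server could not be reached."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as response:
            return response.status
    except HTTPError as err:
        return err.code  # 4xx/5xx responses raise HTTPError
    except OSError:
        return 0  # DNS failures, refused connections, timeouts


def broken_links(html: str, base_url: str, status_of=http_status) -> dict[str, int]:
    """Return {url: status} for links that are unreachable (0) or return >= 400."""
    collector = _HrefCollector(base_url)
    collector.feed(html)
    broken = {}
    for url in dict.fromkeys(collector.urls):  # de-duplicate, keep order
        status = status_of(url)
        if status == 0 or status >= 400:
            broken[url] = status
    return broken
```

Call `broken_links(page_html, page_url)` with the HTML of a page and that page's own URL so that relative links resolve correctly.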

Avoid a Bad Neighborhood

If your website’s IP address is in a bad neighborhood, search engines may take this as an indication that your content is of lower quality. Free web hosting and hosting for explicit websites are often bad neighborhoods. You can find out which other sites share your website’s IP address with a reverse IP lookup, and then research the quality of these neighboring websites and whether they are on a blacklist. The following signs may indicate that a site is considered part of a bad neighborhood:

- Security warning on the site in Google search

- The site is listed on one or more blacklists (e.g. malware or abuse blacklists)

- The website only has obvious SPAM content

Conclusion

SEO is no longer just simple SEO. Many aspects of other fields, such as web development, usability, programming, security and marketing, now play into search engine optimization. This trend is set to continue, because search engines take more and more of these factors into account. It is therefore essential to deal with the whole web field if you want to pursue long-term search engine optimization.

Of course, not all of these points are best practices for every search engine optimizer and website operator. I have collected them in the hope that they help beginners and give professionals new ideas.
